Demystifying Cholesterol

10almonds is reader-supported. We may, at no cost to you, receive a portion of sales if you purchase a product through a link in this article.

All About Cholesterol

When it comes to cholesterol, the most common lay understanding (especially under a certain age) is “it’s bad”.

A more informed view (and more common after a certain age) is “LDL cholesterol is bad; HDL cholesterol is good”.

A more nuanced view is “LDL cholesterol is established as significantly associated with (and almost certainly a causal factor of) atherosclerotic cardiovascular disease and related mortality in men; in women it is less strongly associated and may or may not be a causal factor”.

You can read more about that here:

Statins: His & Hers? ← we highly recommend reading this, especially if you are a woman and/or considering/taking statins. To be clear, we’re not saying “don’t take statins!”, because they might be the right medical choice for you and we’re not your doctors. But we are saying: here’s something to at least know about and consider.

Beyond HDL & LDL

There is also VLDL cholesterol, which, as you might have guessed, stands for “very low-density lipoprotein”. It has a high, unhealthy triglyceride content, and it increases atherosclerotic plaque. In other words, it hardens your arteries more quickly.

The term “hardening the arteries” is an insufficient descriptor of what’s happening though, because while yes, it is hardening the arteries, it’s also narrowing them. Minerals and detritus passing through in the blood get stuck in the cholesterol (which itself is a waxy substance, by the way). The detritus sounds bad, but it is supposed to be there; it’s got to get out of the body somehow, and it’s off to get filtered and excreted. Before you know it, those minerals and other things have become a solid part of the interior of your artery wall, like a little plastering team came and slapped plaster on the inside of the walls, then when it hardened, slapped more plaster on, and so on. Macrophages (normally the body’s best interior clean-up team) can’t eat things much bigger than themselves, so they can’t tackle the build-up of plaque.

Impact on the heart

Narrower, less flexible arteries mean poorer circulation, which means that organs can start having problems. That obviously includes your heart itself: not only does it have to work harder to keep the blood circulating through the narrower blood vessels, but it is also not immune to being starved of oxygen and nutrients along with the rest of the body when the circulation isn’t good enough. It’s a catch-22.

What if LDL is low and someone is getting heart disease anyway?

That’s often a case of apolipoprotein B (Apo-B). Unlike lipoprotein A, which is bound to LDL and so usually* isn’t a problem if LDL is in “safe” ranges, Apo-B can more often cause problems even when LDL is low. Neither of these is tested for in most standard cholesterol tests, by the way, so you might have to ask for them.

*Around 1 in 20 people have hereditary extra risk factors for this.

What to do about it?

Well, get those lipid tests! That includes asking for the LpA and Apo-B tests, especially if you have a history of heart disease in your family, or otherwise know you have a genetic risk factor.

With or without extra genetic risks, it’s good to get lipid tests done annually from 40 onwards (earlier, if you have extra risk factors).

See also: Understanding your cholesterol numbers

Wondering whether you have an increased genetic risk or not?

Genetic Testing: Health Benefits & Methods ← we think this is worth doing; it’s a “one-off test tells many useful things”. Usually done from a saliva sample, but some companies arrange a blood draw instead. Cost is usually quite affordable; do shop around, though.

Additionally, talk to your pharmacist to check whether any of your meds have contraindications or interactions you should be aware of in this regard. Pharmacists usually know contraindications and interactions better than doctors do, and unlike doctors, they don’t have social pressure on them to know everything, which means that if they’re not sure, instead of just guessing and reassuring you in a confident voice, they’ll actually check.

Lastly, shocking nobody, all the usual lifestyle medicine advice applies here. In particular: get plenty of moderate exercise and eat a good diet, preferably mostly if not entirely plant-based, and go easy on the saturated fat.

Note: while a vegan diet contains zero dietary cholesterol (because plants don’t make it), vegans can still get unhealthy blood lipid levels, because we are animals and—like most animals—our body is perfectly capable of making its own cholesterol (indeed, we do need some cholesterol to function), and it can make its own in the wrong balance, if for example we go too heavy on certain kinds of (yes, even some plant-based) saturated fat.

Read more: Can Saturated Fats Be Healthy? ← see for example how palm oil and coconut oil are both plant-based, and both high in saturated fat, but palm oil’s is heart-unhealthy on balance, while coconut oil’s is heart-healthy on balance (in moderation).

Want to know more about your personal risk?

Try the American College of Cardiology’s ASCVD risk estimator (it’s free)

Take care!

Don’t Forget…

Did you arrive here from our newsletter? Don’t forget to return to the email to continue learning!

Longevity Noodles

Noodles may put the “long” into “longevity”, but most of the longevity here comes from the ergothioneine in the mushrooms! The rest of the ingredients are great too though, including the noodles themselves—soba noodles are made from buckwheat, which is not a wheat, nor even a grass (it’s a flowering plant), and does not contain gluten*, but does count as one of your daily portions of grains!

*unless mixed with wheat flour—which it shouldn’t be, but check labels, because companies sometimes cut it with wheat flour, which is cheaper, to increase their profit margin

You will need

- 1 cup (about 9 oz; usually 1 packet) soba noodles

- 6 medium portobello mushrooms, sliced

- 3 kale leaves, de-stemmed and chopped

- 1 shallot, chopped, or ¼ cup chopped onion of any kind

- 1 carrot, diced small

- 1 cup peas

- ½ bulb garlic, minced

- 2 tbsp rice vinegar

- 1 tsp grated fresh ginger

- 1 tsp black pepper, coarse ground

- 1 tsp red chili flakes

- ½ tsp MSG or 1 tbsp low-sodium soy sauce

- Avocado oil, for frying (alternatively: extra virgin olive oil or cold-pressed coconut oil are both perfectly good substitutions)

Method

(we suggest you read everything at least once before doing anything)

1) Cook the soba noodles per the packet instructions, rinse, and set aside.

2) Heat a little oil in a skillet, add the shallot, and cook for about 2 minutes.

3) Add the carrot and peas and cook for 3 more minutes.

4) Add the mushrooms, kale, garlic, ginger, black pepper, chili flakes, and vinegar, and cook for 1 more minute, stirring well.

5) Add the noodles, as well as the MSG or low-sodium soy sauce, and cook for yet 1 more minute.

6) Serve!

Enjoy!

Want to learn more?

For those interested in some of the science of what we have going on today:

- Rice vs Buckwheat – Which is Healthier?

- The Magic Of Mushrooms: “The Longevity Vitamin” (That’s Not A Vitamin)

- Monosodium Glutamate: Sinless Flavor-Enhancer Or Terrible Health Risk?

- Our Top 5 Spices: How Much Is Enough For Benefits? ← 4/5 of these spices are in today’s dish!

Take care!

Celeriac vs Celery – Which is Healthier?

Our Verdict

When comparing celeriac to celery, we picked the celeriac.

Why?

Yes, these are essentially the same plant, but there are important nutritional differences:

In terms of macros, celeriac has more than 2x the protein, and slightly more carbs and fiber. Both have a very low glycemic index, so the higher protein and fiber make celeriac the winner in this category.

In the category of vitamins, celeriac has more of vitamins B1, B3, B5, B6, C, E, K, and choline, while celery has more of vitamins A and B9. An easy win for celeriac.

When it comes to minerals, celeriac has more copper, calcium, iron, magnesium, manganese, phosphorus, potassium, selenium, and zinc, while celery is not higher in any minerals. Another obvious win for celeriac.

Adding these sections up makes for a clear overall win for celeriac, but by all means enjoy either or both!

Want to learn more?

You might like to read:

What’s Your Plant Diversity Score?

Take care!

When Carbs, Proteins, & Fats Switch Metabolic Roles

Strange Things Happening In The Islets Of Langerhans

It is generally known and widely accepted that carbs have the biggest effect on blood sugar levels (and thus insulin response), fats less so, and protein least of all.

And yet, there was a groundbreaking study published yesterday which found:

❝Glucose is the well-known driver of insulin, but we were surprised to see such high variability, with some individuals showing a strong response to proteins, and others to fats, which had never been characterized before.

Insulin plays a major role in human health, in everything from diabetes, where it is too low*, to obesity, weight gain and even some forms of cancer, where it is too high.

These findings lay the groundwork for personalized nutrition that could transform how we treat and manage a range of conditions.❞

*saying “too low” here is potentially misleading without clarification. Yes, Type 1 Diabetics will have too little [endogenous] insulin (because the pancreas is at war with itself and thus isn’t producing useful quantities of insulin, if any). Type 2, however, is more a case of acquired insulin insensitivity: because of having too much at once too often, the body stops listening to it, “boy who cried wolf”-style. The pancreas also starts to get fatigued from producing so much insulin that’s often getting ignored, and does eventually produce less and less while the body needs more and more insulin to get the same response. So it can be legitimately said “there’s not enough”, but that’s more of a subjective outcome than an objective cause.

Back to the study itself, though…

What they found, and how they found it

Researchers took pancreatic islets from 140 heterogeneous donors (varied in age and sex; ostensibly mostly non-diabetic donors, but they acknowledge type 2 diabetes could potentially have gone undiagnosed in some donors*) and tested cell cultures from each with various carbs, proteins, and fats.

They found the expected results in most of the cases, but around 9% responded more strongly to the fats than the carbs (even more strongly than to glucose specifically), and even more surprisingly 8% responded more strongly to the proteins.

*there were also some known type 2 diabetics amongst the donors; as expected, those had a poor insulin response to glucose, but their insulin responses to proteins and fats were largely unaffected.

What this means

While this is, in essence, a pilot study (the researchers called for larger and more varied studies, as well as in vivo human studies), the implications so far are important:

It appears that, for a minority of people, a lot of (generally considered very good) antidiabetic advice may not be working in the way previously understood. They’re going to (for example) put fat on their carbs to reduce the blood sugar spike, which will technically still work, but the insulin response is going to be briefly spiked anyway, because of the fats, and it is that very insulin response that will lower the blood sugars.

In practical terms, there’s not a lot we can do about this at home just yet—even continuous glucose monitors won’t tell us precisely, because they’re monitoring glucose, not the insulin response. We could probably measure everything and do some math and work out what our insulin response has been like based on the pace of change in blood sugar levels (which won’t decrease without insulin to allow such), but even that is at best grounds for a hypothesis for now.
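As a minimal sketch of the “do some math” idea above, the following computes the pace of change between consecutive glucose readings. The readings are made-up illustrative numbers and the function name is hypothetical; as noted, a fast fall in blood sugar is at best a hint about insulin response, not a measurement of it.

```python
from typing import List, Tuple


def glucose_rates(readings: List[Tuple[float, float]]) -> List[float]:
    """Rate of change (mg/dL per minute) between consecutive readings.

    Each reading is (minutes_since_meal, glucose_mg_dl); the times must be
    strictly increasing.
    """
    rates = []
    for (t0, g0), (t1, g1) in zip(readings, readings[1:]):
        if t1 <= t0:
            raise ValueError("times must be strictly increasing")
        rates.append((g1 - g0) / (t1 - t0))
    return rates


# Hypothetical readings, invented purely for illustration
readings = [(0, 90.0), (30, 150.0), (60, 120.0), (90, 96.0)]
rates = glucose_rates(readings)
print(rates)
```

Comparing how steeply the negative rates fall after different meals is the kind of comparison the paragraph above has in mind, with all the caveats it gives.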

Hopefully, more publicly-available tests will be developed soon, enabling us all to know our “insulin response type” per the proteome predictors discovered in this study, rather than having to just blindly bet on it being “normal”.

Ironically, this very response may have hidden itself for a while—if taking fats raised insulin response without raising blood sugar levels, then if blood sugar levels are the only thing being measured, all we’ll see is “took fats at dinner; blood sugars returned to normal more quickly than when taking carbs without fats”.

You can read the study in full here:

Proteomic predictors of individualized nutrient-specific insulin secretion in health and disease

Want to know more about blood sugar management?

You might like to catch up on:

- 10 Ways To Balance Your Blood Sugars

- Track Your Blood Sugars For Better Personalized Health

- How To Turn Back The Clock On Insulin Resistance

Take care!

How do science journalists decide whether a psychology study is worth covering?

Complex research papers and data flood academic journals daily, and science journalists play a pivotal role in disseminating that information to the public. This can be a daunting task, requiring a keen understanding of the subject matter and the ability to translate dense academic language into narratives that resonate with the general public.

Several resources and tip sheets, including the Know Your Research section here at The Journalist’s Resource, aim to help journalists hone their skills in reporting on academic research.

But what factors do science journalists look for to decide whether a social science research study is trustworthy and newsworthy? That’s the question researchers at the University of California, Davis, and the University of Melbourne in Australia examine in a recent study, “How Do Science Journalists Evaluate Psychology Research?” published in September in Advances in Methods and Practices in Psychological Science.

Their online survey of 181 mostly U.S.-based science journalists looked at how and whether they were influenced by four factors in fictitious research summaries: the sample size (number of participants in the study), sample representativeness (whether the participants in the study were from a convenience sample or a more representative sample), the statistical significance level of the result (just barely statistically significant or well below the significance threshold), and the prestige of a researcher’s university.

The researchers found that sample size was the only factor that had a robust influence on journalists’ ratings of how trustworthy and newsworthy a study finding was.

University prestige had no effect, while the effects of sample representativeness and statistical significance were inconclusive.

But there’s nuance to the findings, the authors note.

“I don’t want people to think that science journalists aren’t paying attention to other things, and are only paying attention to sample size,” says Julia Bottesini, an independent researcher, a recent Ph.D. graduate from the Psychology Department at UC Davis, and the first author of the study.

Overall, the results show that “these journalists are doing a very decent job” vetting research findings, Bottesini says.

Also, the findings from the study are not generalizable to all science journalists or other fields of research, the authors note.

“Instead, our conclusions should be circumscribed to U.S.-based science journalists who are at least somewhat familiar with the statistical and replication challenges facing science,” they write. (Over the past decade a series of projects have found that the results of many studies in psychology and other fields can’t be reproduced, leading to what has been called a ‘replication crisis.’)

“This [study] is just one tiny brick in the wall and I hope other people get excited about this topic and do more research on it,” Bottesini says.

More on the study’s findings

The study’s findings can be useful for researchers who want to better understand how science journalists read their research and what kind of intervention — such as teaching journalists about statistics — can help journalists better understand research papers.

“As an academic, I take away the idea that journalists are a great population to try to study because they’re doing something really important and it’s important to know more about what they’re doing,” says Ellen Peters, director of Center for Science Communication Research at the School of Journalism and Communication at the University of Oregon. Peters, who was not involved in the study, is also a psychologist who studies human judgment and decision-making.

Peters says the study was “overall terrific.” She adds that understanding how journalists do their work “is an incredibly important thing to do because journalists are who reach the majority of the U.S. with science news, so understanding how they’re reading some of our scientific studies and then choosing whether to write about them or not is important.”

The study, conducted between December 2020 and March 2021, is based on an online survey of journalists who said they at least sometimes covered science or other topics related to health, medicine, psychology, social sciences, or well-being. They were offered a $25 Amazon gift card as compensation.

Among the participants, 77% were women, 19% were men, 3% were nonbinary and 1% preferred not to say. About 62% said they had studied physical or natural sciences at the undergraduate level, and 24% at the graduate level. Also, 48% reported having a journalism degree. The study did not include the journalists’ news reporting experience level.

Participants were recruited through the professional network of Christie Aschwanden, an independent journalist and consultant on the study, which could be a source of bias, the authors note.

“Although the size of the sample we obtained (N = 181) suggests we were able to collect a range of perspectives, we suspect this sample is biased by an ‘Aschwanden effect’: that science journalists in the same professional network as C. Aschwanden will be more familiar with issues related to the replication crisis in psychology and subsequent methodological reform, a topic C. Aschwanden has covered extensively in her work,” they write.

Participants were randomly presented with eight of 22 one-paragraph fictitious social and personality psychology research summaries with fictitious authors. The summaries are posted on Open Science Framework, a free and open-source project management tool for researchers by the Center for Open Science, with a mission to increase openness, integrity and reproducibility of research.

For instance, one of the vignettes reads:

“Scientists at Harvard University announced today the results of a study exploring whether introspection can improve cooperation. 550 undergraduates at the university were randomly assigned to either do a breathing exercise or reflect on a series of questions designed to promote introspective thoughts for 5 minutes. Participants then engaged in a cooperative decision-making game, where cooperation resulted in better outcomes. People who spent time on introspection performed significantly better at these cooperative games (t (548) = 3.21, p = 0.001). ‘Introspection seems to promote better cooperation between people,’ says Dr. Quinn, the lead author on the paper.”
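As a rough check on the statistic reported in this vignette, the sketch below recomputes a two-sided p-value for t(548) = 3.21. It assumes a normal approximation to the t distribution (reasonable at 548 degrees of freedom, but an assumption nonetheless), and the function name is ours rather than anything from the study.

```python
import math


def two_sided_p_normal_approx(t_stat: float) -> float:
    """Two-sided p-value, treating the t statistic as a standard normal z.

    Assumption: with hundreds of degrees of freedom the t distribution is
    close to normal, so this is an approximation, not an exact t-test.
    """
    # For a standard normal Z, P(|Z| >= |t|) = erfc(|t| / sqrt(2))
    return math.erfc(abs(t_stat) / math.sqrt(2))


p = two_sided_p_normal_approx(3.21)
print(f"approximate two-sided p = {p:.4f}")
```

Under this approximation the result rounds to the p = 0.001 given in the vignette, which is the “well below the significance threshold” condition described earlier.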

In addition to answering multiple-choice survey questions, participants were given the opportunity to answer open-ended questions, such as “What characteristics do you [typically] consider when evaluating the trustworthiness of a scientific finding?”

Bottesini says those responses illuminated how science journalists analyze a research study. Participants often mentioned the prestige of the journal in which it was published or whether the study had been peer-reviewed. Many also seemed to value experimental research designs over observational studies.

Considering statistical significance

When it came to considering p-values, “some answers suggested that journalists do take statistical significance into account, but only very few included explanations that suggested they made any distinction between higher or lower p values; instead, most mentions of p values suggest journalists focused on whether the key result was statistically significant,” the authors write.

Also, many participants mentioned that it was very important to talk to outside experts or researchers in the same field to get a better understanding of the finding and whether it could be trusted, the authors write.

“Journalists also expressed that it was important to understand who funded the study and whether the researchers or funders had any conflicts of interest,” they write.

Participants also “indicated that making claims that were calibrated to the evidence was also important and expressed misgivings about studies for which the conclusions do not follow from the evidence,” the authors write.

In response to the open-ended question, “What characteristics do you [typically] consider when evaluating the trustworthiness of a scientific finding?” some journalists wrote they checked whether the study was overstating conclusions or claims. Below are some of their written responses:

- “Is the researcher adamant that this study of 40 college kids is representative? If so, that’s a red flag.”

- “Whether authors make sweeping generalizations based on the study or take a more measured approach to sharing and promoting it.”

- “Another major point for me is how ‘certain’ the scientists appear to be when commenting on their findings. If a researcher makes claims which I consider to be over-the-top about the validity or impact of their findings, I often won’t cover.”

- “I also look at the difference between what an experiment actually shows versus the conclusion researchers draw from it — if there’s a big gap, that’s a huge red flag.”

Peters says the study’s findings show that “not only are journalists smart, but they have also gone out of their way to get educated about things that should matter.”

What other research shows about science journalists

A 2023 study, published in the International Journal of Communication, based on an online survey of 82 U.S. science journalists, aims to understand what they know and think about open-access research, including peer-reviewed journals and articles that don’t have a paywall, and preprints. Data was collected between October 2021 and February 2022. Preprints are scientific studies that have yet to be peer-reviewed and are shared on open repositories such as medRxiv and bioRxiv. The study finds that its respondents “are aware of OA and related issues and make conscious decisions around which OA scholarly articles they use as sources.”

A 2021 study, published in the Journal of Science Communication, looks at the impact of the COVID-19 pandemic on the work of science journalists. Based on an online survey of 633 science journalists from 77 countries, it finds that the pandemic somewhat brought scientists and science journalists closer together. “For most respondents, scientists were more available and more talkative,” the authors write. The pandemic has also provided an opportunity to explain the scientific process to the public, and remind them that “science is not a finished enterprise,” the authors write.

More than a decade ago, a 2008 study, published in PLOS Medicine, and based on an analysis of 500 health news stories, found that “journalists usually fail to discuss costs, the quality of the evidence, the existence of alternative options, and the absolute magnitude of potential benefits and harms,” when reporting on research studies. Giving time to journalists to research and understand the studies, giving them space for publication and broadcasting of the stories, and training them in understanding academic research are some of the solutions to fill the gaps, writes Gary Schwitzer, the study author.

Advice for journalists

We asked Bottesini, Peters, Aschwanden and Tamar Wilner, a postdoctoral fellow at the University of Texas, who was not involved in the study, to share advice for journalists who cover research studies. Wilner is conducting a study on how journalism research informs the practice of journalism. Here are their tips:

1. Examine the study before reporting it.

Does the study claim match the evidence? “One thing that makes me trust the paper more is if their interpretation of the findings is very calibrated to the kind of evidence that they have,” says Bottesini. In other words, if the study makes a claim in its results that’s far-fetched, the authors should present a lot of evidence to back that claim.

Not all surprising results are newsworthy. If you come across a surprising finding from a single study, Peters advises you to step back and remember Carl Sagan’s quote: “Extraordinary claims require extraordinary evidence.”

How transparent are the authors about their data? For instance, are the authors posting information such as their data and the computer codes they use to analyze the data on platforms such as Open Science Framework, AsPredicted, or The Dataverse Project? Some researchers ‘preregister’ their studies, which means they share how they’re planning to analyze the data before they see them. “Transparency doesn’t automatically mean that a study is trustworthy,” but it gives others the chance to double-check the findings, Bottesini says.

Look at the study design. Is it an experimental study or an observational study? Observational studies can show correlations but not causation.

“Observational studies can be very important for suggesting hypotheses and pointing us towards relationships and associations,” Aschwanden says.

Experimental studies can provide stronger evidence toward a cause, but journalists must still be cautious when reporting the results, she advises. “If we end up implying causality, then once it’s published and people see it, it can really take hold,” she says.

Know the difference between preprints and peer-reviewed, published studies. Peer-reviewed papers tend to be of higher quality than those that are not peer-reviewed. Read our tip sheet on the difference between preprints and journal articles.

Beware of predatory journals. Predatory journals are journals that “claim to be legitimate scholarly journals, but misrepresent their publishing practices,” according to a 2020 journal article, published in the journal Toxicologic Pathology, “Predatory Journals: What They Are and How to Avoid Them.”

2. Zoom in on data.

Read the methods section of the study. The methods section of the study usually appears after the introduction and background section. “To me, the methods section is almost the most important part of any scientific paper,” says Aschwanden. “It’s amazing to me how often you read the design and the methods section, and anyone can see that it’s a flawed design. So just giving things a gut-level check can be really important.”

What’s the sample size? Not all good studies have large numbers of participants, but pay attention to the claims a study makes with a small sample size. “If you have a small sample, you calibrate your claims to the things you can tell about those people and don’t make big claims based on a little bit of evidence,” says Bottesini.

But also remember that factors such as sample size and p-value are not “as clear cut as some journalists might assume,” says Wilner.

How representative of a population is the study sample? “If the study has a non-representative sample of, say, undergraduate students, and they’re making claims about the general population, that’s kind of a red flag,” says Bottesini. Aschwanden points to the acronym WEIRD, which stands for “Western, Educated, Industrialized, Rich, and Democratic,” and is used to highlight a lack of diversity in a sample. Studies based on such samples may not be generalizable to the entire population, she says.

Look at the p-value. Statistical significance is both confusing and controversial, but it’s important to consider. Read our tip sheet, “5 Things Journalists Need to Know About Statistical Significance,” to better understand it.
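To put rough numbers on the sample-size point above, here is a minimal sketch of how the approximate 95% margin of error for a proportion shrinks as the sample grows. It assumes a simple random sample, which a convenience sample of undergraduates is not, so treat the figures as a best case; the function name and the two sample sizes (40, as in the journalist’s comment, and 550, as in the vignette) are chosen only for illustration.

```python
import math


def margin_of_error(n: int, proportion: float = 0.5, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a proportion.

    Assumption: a simple random sample of size n; z = 1.96 is the usual
    normal-approximation multiplier for 95% confidence.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    return z * math.sqrt(proportion * (1 - proportion) / n)


for n in (40, 550):
    print(f"n = {n}: about ±{margin_of_error(n) * 100:.1f} percentage points")
```

The margin shrinks with the square root of the sample size, so a much bigger sample narrows the uncertainty but does nothing to fix an unrepresentative one.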

3. Talk to scientists not involved in the study.

If you’re not sure about the quality of a study, ask for help. “Talk to someone who is an expert in study design or statistics to make sure that [the study authors] use the appropriate statistics and that methods they use are appropriate because it’s amazing to me how often they’re not,” says Aschwanden.

Get an opinion from an outside expert. It’s always a good idea to present the study to other researchers in the field who have no conflicts of interest and are not involved in the research you’re covering, and to get their opinion. “Don’t take scientists at their word. Look into it. Ask other scientists, preferably the ones who don’t have a conflict of interest with the research,” says Bottesini.

4. Remember that a single study is simply one piece of a growing body of evidence.

“I have a general rule that a single study doesn’t tell us very much; it just gives us proof of concept,” says Peters. “It gives us interesting ideas. It should be retested. We need an accumulation of evidence.”

Aschwanden says as a practice, she tries to avoid reporting stories about individual studies, with some exceptions such as very large, randomized controlled studies that have been underway for a long time and have a large number of participants. “I don’t want to say you never want to write a single-study story, but it always needs to be placed in the context of the rest of the evidence that we have available,” she says.

Wilner advises journalists to spend some time looking at the scope of research on the study’s specific topic and learn how it has been written about and studied up to that point.

“We would want science journalists to be reporting balance of evidence, and not focusing unduly on the findings that are just in front of them in a most recent study,” Wilner says. “And that’s a very difficult thing to ask journalists to do because they’re being asked to make their article very newsy, so it’s a difficult balancing act, but we can try and push journalists to do more of that.”

5. Remind readers that science is always changing.

“Science is always two steps forward, one step back,” says Peters. Give the public a notion of uncertainty, she advises. “This is what we know today. It may change tomorrow, but this is the best science that we know of today.”

Aschwanden echoes the sentiment. “All scientific results are provisional, and we need to keep that in mind,” she says. “It doesn’t mean that we can’t know anything, but it’s very important that we don’t overstate things.”

Authors of a study published in PNAS in January analyzed more than 14,000 psychology papers and found that replication success rates differ widely by psychology subfields. That study also found that papers that could not be replicated received more initial press coverage than those that could.

The authors note that the media “plays a significant role in creating the public’s image of science and democratizing knowledge, but it is often incentivized to report on counterintuitive and eye-catching results.”

Ideally, the news media would have a positive relationship with replication success rates in psychology, the authors of the PNAS study write. “Contrary to this ideal, however, we found a negative association between media coverage of a paper and the paper’s likelihood of replication success,” they write. “Therefore, deciding a paper’s merit based on its media coverage is unwise. It would be valuable for the media to remind the audience that new and novel scientific results are only food for thought before future replication confirms their robustness.”

Additional reading

Uncovering the Research Behaviors of Reporters: A Conceptual Framework for Information Literacy in Journalism

Katerine E. Boss, et al. Journalism & Mass Communication Educator, October 2022.

The Problem with Psychological Research in the Media

Steven Stosny. Psychology Today, September 2022.

Critically Evaluating Claims

Megha Satyanarayana. The Open Notebook, January 2022.

How Should Journalists Report a Scientific Study?

Charles Binkley and Subramaniam Vincent. Markkula Center for Applied Ethics at Santa Clara University, September 2020.

What Journalists Get Wrong About Social Science: Full Responses

Brian Resnick. Vox, January 2016.

From The Journalist’s Resource

8 Ways Journalists Can Access Academic Research for Free

5 Things Journalists Need to Know About Statistical Significance

5 Common Research Designs: A Quick Primer for Journalists

5 Tips for Using PubPeer to Investigate Scientific Research Errors and Misconduct

What’s Standard Deviation? 4 Things Journalists Need to Know

This article first appeared on The Journalist’s Resource and is republished here under a Creative Commons license.

Don’t Forget…

Did you arrive here from our newsletter? Don’t forget to return to the email to continue learning!

Learn to Age Gracefully

Join the 98k+ American women taking control of their health & aging with our 100% free (and fun!) daily emails:

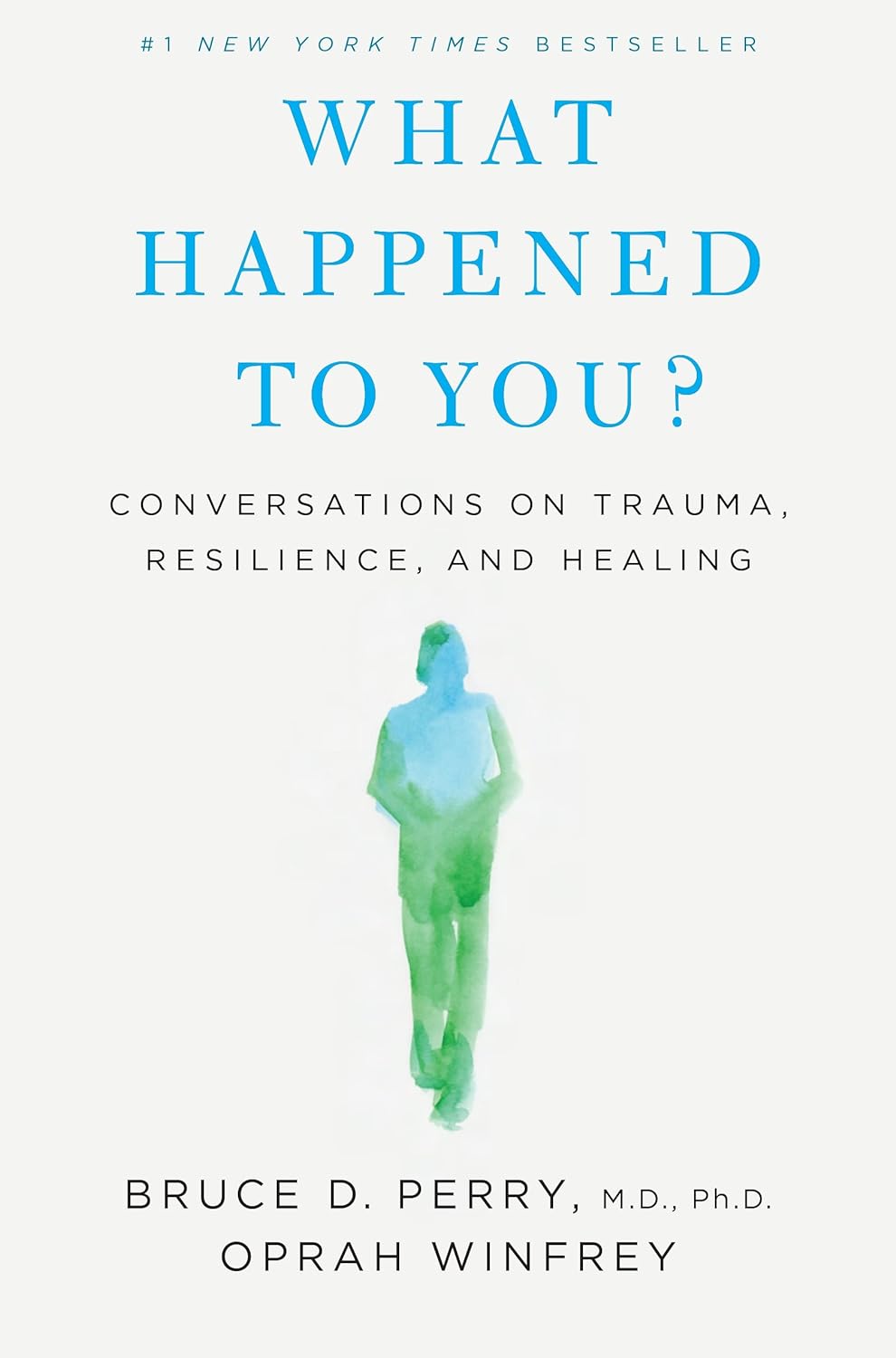

What Happened to You? – by Dr. Bruce Perry and Oprah Winfrey

The very title “What Happened To You?” starts with an assumption that the reader has suffered trauma. This is not just a sample bias of “a person who picks up a book about healing from trauma has probably suffered trauma”, but is also a statistically safe assumption. Around 60% of adults report having suffered some kind of serious trauma.

The authors examine, as the subtitle suggests, these matters in three parts:

- Trauma

- Resilience

- Healing

Trauma can take many forms; sometimes it is a very obvious, dramatic traumatic event; sometimes less so. Sometimes it can be a mountain of small things that eroded our strength, leaving us broken. But what, then, of resilience?

Resilience (in psychology, anyway) is not imperviousness; it is the ability to suffer and recover from things.

Healing is the tail-end part of that: when we have undergone trauma, displayed whatever amount of resilience we could at the time, and have now outgrown our coping strategies and are looking to genuinely heal.

The authors present many personal stories and case studies to illustrate different kinds of trauma and resilience, and then go on to outline what we can do to grow from there.

Bottom line: if you or a loved one has suffered trauma, this book may help a lot in understanding and processing that, and finding a way forwards from it.

Click here to check out “What Happened To You?” and give yourself what you deserve.

Nicotine pouches are being marketed to young people on social media. But are they safe, or even legal?

Flavoured nicotine pouches are being promoted to young people on social media platforms such as TikTok and Instagram.

Although some viral videos have been taken down following a series of reports in The Guardian, clips featuring Australian influencers have claimed nicotine pouches are a safe and effective way to quit vaping. A number of the videos have included links to websites selling these products.

With the rapid rise in youth vaping and the subsequent implementation of several reforms to restrict access to vaping products, it’s not entirely surprising the tobacco industry is introducing more products to maintain its future revenue stream.

The major trans-national tobacco companies, including Philip Morris International and British American Tobacco, all manufacture nicotine pouches. British American Tobacco’s brand of nicotine pouches, Velo, is a leading sponsor of the McLaren Formula 1 team.

But what are nicotine pouches, and are they even legal in Australia?

Like snus, but different

Nicotine pouches are available in many countries around the world, and their sales are increasing rapidly, especially among young people.

Nicotine pouches look a bit like small tea bags and are placed between the lip and gum. They’re typically sold in small, colourful tins of about 15 to 20 pouches. While the pouches don’t contain tobacco, they do contain nicotine that is either extracted from tobacco plants or made synthetically. The pouches come in a wide range of strengths.

As well as nicotine, the pouches commonly contain plant fibres (in place of tobacco, plant fibres serve as a filler and give the pouches shape), sweeteners and flavours. Just like for vaping products, there’s a vast array of pouch flavours available including different varieties of fruit, confectionery, spices and drinks.

The range of appealing flavours, as well as the fact they can be used discreetly, may make nicotine pouches particularly attractive to young people.

Vaping has recently been subject to tighter regulation in Australia. Aleksandr Yu/Shutterstock

Users absorb the nicotine in their mouths and simply replace the pouch when all the nicotine has been absorbed. Tobacco-free nicotine pouches are a relatively recent product, but similar style products that do contain tobacco, known as snus, have been popular in Scandinavian countries, particularly Sweden, for decades.

Snus and nicotine pouches are, however, different products. And given snus contains tobacco and nicotine pouches don’t, the products are subject to quite different regulations in Australia.

What does the law say?

Pouches that contain tobacco, like snus, have been banned in Australia since 1991, as part of a consumer product ban on all forms of smokeless tobacco products. This means other smokeless tobacco products such as chewing tobacco, snuff, and dissolvable tobacco sticks or tablets, are also banned from sale in Australia.

Tobacco-free nicotine pouches cannot legally be sold by general retailers, like tobacconists and convenience stores, in Australia either. But the reasons for this are more complex.

In Australia, under the Poisons Standard, nicotine is a prescription-only medicine, with two exceptions. Nicotine can be used in tobacco prepared and packed for smoking, such as cigarettes, roll-your-own tobacco, and cigars, as well as in preparations for therapeutic use as a smoking cessation aid, such as nicotine patches, gum, mouth spray and lozenges.

If a nicotine-containing product does not meet either of these two exceptions, it cannot be legally sold by general retailers. No nicotine pouches have currently been approved by the Therapeutic Goods Administration as a therapeutic aid in smoking cessation, so in short they’re not legal to sell in Australia.

However, nicotine pouches can be legally imported for personal use only if users have a prescription from a medical professional who can assess if the product is appropriate for individual use.

We only have anecdotal reports of nicotine pouch use, not hard data, as these products are very new in Australia. But we do know authorities are increasingly seizing these products from retailers. It’s highly unlikely any young people using nicotine pouches are accessing them through legal channels.

Health concerns

Nicotine exposure may induce effects including dizziness, headache, nausea and abdominal cramps, especially among people who don’t normally smoke or vape.

Although we don’t yet have much evidence on the long-term health effects of nicotine pouches, we know nicotine is addictive and harmful to health. For example, it can cause problems in the cardiovascular system (such as heart arrhythmia), particularly at high doses. It may also have negative effects on adolescent brain development.

The nicotine content of some of the nicotine pouches on the market is alarmingly high. Certain brands offer pouches containing more than 10mg of nicotine, which is similar to a cigarette. According to a World Health Organization (WHO) report, pouches deliver enough nicotine to induce and sustain nicotine addiction.

Pouches are also being marketed as a product to use when it’s not possible to vape or smoke, such as on a plane. So instead of helping a person quit, they may be used in addition to smoking and vaping. And importantly, there’s no clear evidence pouches are an effective smoking or vaping cessation aid.

A Velo product display at Dubai airport in October 2022. Nicotine pouches are marketed as safe to use on planes. Becky Freeman

Further, some nicotine pouches, despite being tobacco-free, still contain tobacco-specific nitrosamines. These compounds can damage DNA and, with long-term exposure, can cause cancer.

Overall, there’s limited data on the harms of nicotine pouches because they’ve been on the market for only a short time. But the WHO recommends a cautious approach given their similarities to smokeless tobacco products.

For anyone wanting advice and support to quit smoking or vaping, it’s best to talk to your doctor or pharmacist, or access trusted sources such as Quitline or the iCanQuit website.

Becky Freeman, Associate Professor, School of Public Health, University of Sydney

This article is republished from The Conversation under a Creative Commons license. Read the original article.
